BSH employees cultivate continuous learning as a mindset

As one of the world’s leading manufacturers of home appliances, we’re always on the move. Whether it’s innovative technologies, the latest work methods, exciting seminars or our involvement in the protection of natural resources, we have a lot of stories to tell. Here on our corporate blog, these stories have their place. Have fun reading and discovering them!

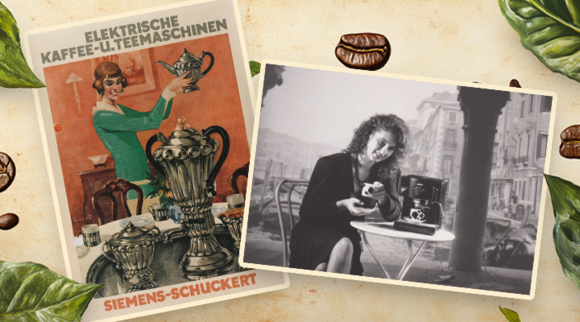

Coffee is one of the world's most popular hot drinks because freshly ground coffee is a treat at any time of day. We wanted to know when the first electric coffee machine was invented and how the BSH brands have shaped coffee enjoyment over the years. Here are the answers.

Learning, growing, supporting: At BSH, the Global Graduate Accelerator is a unique program that offers graduates a holistic career start. On the one hand, it includes experience-based learning through training and workshops, mentoring and networking. On the other hand, it means working at three locations: the BSH headquarters in Munich, a German factory, and a site abroad during a three-month rotation that provides invaluable insights and experiences. From embracing new cultures to working on cutting-edge projects, our program is designed to foster both professional and personal development. Meet Chloe Anderton and Rucheng Wang. The two women with American and Chinese roots have worked in Zaragoza, Spain, and Milan, Italy. They share their journeys with us to show how working abroad with BSH means growing personally and professionally.

At BSH, talented people from diverse backgrounds strive towards one common goal: improving the quality of life at home through our exceptional brands, high-class products, and outstanding solutions. The diversity of our people is one of the keys to our success, making us more innovative, resilient, and creative. Through our campaign “Nominate Your Diversity Champion”, we highlight those who actively foster Diversity, Equity and Inclusion (DEI) in their daily working lives. This time, we want to introduce you to a whole team that has been nominated by their peers. Our Category Markets Management Food team from the Product Division Consumer Products is a true role model for cultural diversity. Based in our headquarters in Munich (Germany) and in Istanbul (Türkiye), the team consists of ten people of six different nationalities. As a result, different communication styles, work approaches and conflict resolution methods come together, but the team knows how to navigate these differences and turn their different perspectives into strengths. Read more about the benefits they see in embracing DEI and learn about their approaches to creating a safe space in our interview below.

At BSH, we recognize the strength, resilience, and diverse abilities of our employees worldwide and aim to create a work environment in which everyone feels seen, heard, and valued. To really achieve this, we have to be aware of our people’s needs and encourage them to express those needs openly, particularly if they have special requirements. Equity and inclusion are among our core values, which is why we want to raise awareness of people with disabilities and of how you can support them. To create this blog post, we talked to colleagues from around the world. One of the stories that particularly impressed us is the story of Avi Arditi, Key Account Manager at BSH Israel.

Dive into the heart of Diversity, Equity, and Inclusion (DEI) at BSH with Elif Haliloğlu Ürkmez, our new Diversity Champion. Discover the transformative power of storytelling, the value of every individual voice, and the impactful moments that define a company’s journey towards true inclusivity. Whether you’re curious about BSH’s DEI evolution or looking for ways to start fostering DEI in your workplace, Elif’s insights are a source of inspiration. Learn more about change, growth, and the future of workplace inclusion.

Bosch introduces the new Series 6 induction cooktops, bringing new convenience in a new design to the kitchen. With the PerfectFry Plus frying sensor, consumers can achieve optimal results in many dishes with over 11 selectable temperature levels. With the new favorites button, quick access can also be configured with two personal favorite functions. In addition, accessories that are precisely adapted to the size of the Flex zones make frying even more convenient. Michael Hegendörfer, Product Manager in the Surface Cooking & Ventilation Division, explains exactly which functions support consumers in their everyday cooking.

Siemens unveils the first iQ700 oven with automatic food recognition: Artificial intelligence makes baking, roasting and braising easier. At market launch, the networked appliance will be able to identify around 40 different dishes, and updates will steadily expand this number. All consumers have to do is put the dish in the oven, close the door and give a final "OK". Clemens Hepperle, Product Owner for Oven Cooking in the Europe region, explains in an interview exactly how this works.

With the new Series 6 & 8 washer dryers, Bosch combines a dryer and washing machine with state-of-the-art technology. In this way, consumers save not only space, but also detergent, ironing effort and energy. In addition, the extra-large washing drum offers space for up to 12 kg of laundry, so that even families and households with a high volume of laundry can get everything washed in just a few cycles. Florian Zoellner, Product Owner in the Laundry Care division, explains in an interview how all this works with the help of automatic dosing and the Iron Assist program.

Stock up, have more fresh fruits and vegetables at home, shop only once a week. Siemens is teaming up with kitchen furniture manufacturers to help create space in built-in kitchens. This fall, Siemens launches a fully integrable fridge-freezer combination for an almost 2-meter-high niche. Jörg Jaugstetter, Product Manager in the Cooling Division, talks about the development and uniqueness of the new helper in the kitchen and the expectations for it.

BSH Hausgeräte GmbH, with a total turnover of some EUR 15.6 billion and 62,000 employees in 2022, is a global leader in the home appliance industry. The company’s brand portfolio includes eleven well-known appliance brands like Bosch, Siemens, Gaggenau and Neff as well as the ecosystem brand Home Connect and service brands like Kitchen Stories. BSH produces at 40 factories and is represented in some 50 countries. BSH is a Bosch Group company.